
Exploration and preference satisfaction trade-off in reward-free learning

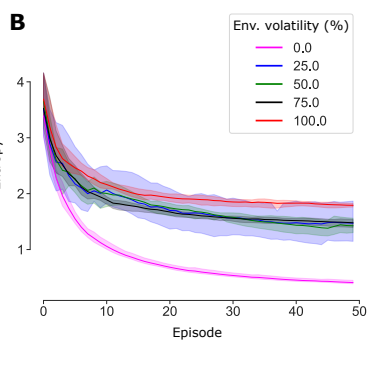

Biological agents interact meaningfully with their environment despite the absence of immediate reward signals. In such instances, an agent can learn preferred modes of behaviour that lead to predictable states, which are necessary for survival. In this paper, we pursue the notion that this learnt behaviour can be a consequence of reward-free preference learning that ensures an appropriate trade-off between exploration and preference satisfaction. To this end, we introduce a model-based Bayesian agent equipped with a preference learning mechanism (pepper) using conjugate priors. These conjugate priors augment the expected free energy planner so that it learns preferences over states (or outcomes) across time. Importantly, our approach enables the agent to learn preferences that encourage adaptive behaviour at test time. We illustrate this in the OpenAI Gym FrozenLake and the 3D mini-world environments, with and without volatility. In a constant environment, these agents learn confident (i.e., precise) preferences and act to satisfy them. Conversely, in a volatile setting, perpetual preference uncertainty maintains exploratory behaviour. Our experiments suggest that learnable (reward-free) preferences entail a trade-off between exploration and preference satisfaction. Pepper offers a straightforward framework for designing adaptive agents when reward functions cannot be predefined, as is often the case in real environments.
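To give some intuition for how conjugate priors can yield preferences that become precise in a stable world but stay uncertain in a volatile one, here is a minimal sketch. It assumes a Dirichlet prior over a categorical distribution of discrete outcomes (the standard conjugate pair for categorical data) and treats the Dirichlet posterior mean as the preferred-outcome distribution. It also crudely models "volatility" as an outcome stream that keeps shifting. The function names (`update_preferences`, `preference_distribution`, `learn`) are hypothetical, and this is not the paper's implementation or its expected free energy planner.

```python
import math
from typing import List, Sequence


def update_preferences(alpha: List[float], outcome: int) -> List[float]:
    """Dirichlet-categorical conjugate update: add one pseudo-count to the observed outcome."""
    new_alpha = list(alpha)
    new_alpha[outcome] += 1.0
    return new_alpha


def preference_distribution(alpha: Sequence[float]) -> List[float]:
    """Posterior mean of the Dirichlet, used here (by assumption) as the preference distribution."""
    total = sum(alpha)
    return [a / total for a in alpha]


def entropy(p: Sequence[float]) -> float:
    """Shannon entropy in nats; lower entropy means more confident (precise) preferences."""
    return -sum(pi * math.log(pi) for pi in p if pi > 0)


def learn(outcomes: Sequence[int], n_outcomes: int, prior: float = 1.0) -> List[float]:
    """Start from a flat Dirichlet prior and accumulate counts for every outcome the agent visits."""
    alpha = [prior] * n_outcomes
    for o in outcomes:
        alpha = update_preferences(alpha, o)
    return alpha


if __name__ == "__main__":
    # Toy stand-in for a constant environment: the agent keeps reaching the same outcome.
    constant = [0] * 100
    # Toy stand-in for a volatile environment: the visited outcome keeps changing.
    volatile = [t % 4 for t in range(100)]
    for name, stream in [("constant", constant), ("volatile", volatile)]:
        alpha = learn(stream, n_outcomes=4)
        print(name, round(entropy(preference_distribution(alpha)), 3))
```

In this toy setting, the constant stream concentrates the Dirichlet counts on a single outcome, so the learnt preferences become sharp. The volatile stream spreads the counts evenly, which leaves the preference distribution close to uniform (maximal entropy). In a planner that scores policies partly by how well predicted outcomes match preferences, that lingering uncertainty is one plausible route to sustained exploration.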

Noor Sajid, Panagiotis Tigas, Alexey Zakharov, Zafeirios Fountas, Karl Friston

ICML 2021 Workshop on Unsupervised Reinforcement Learning
