Reproducibility and Code

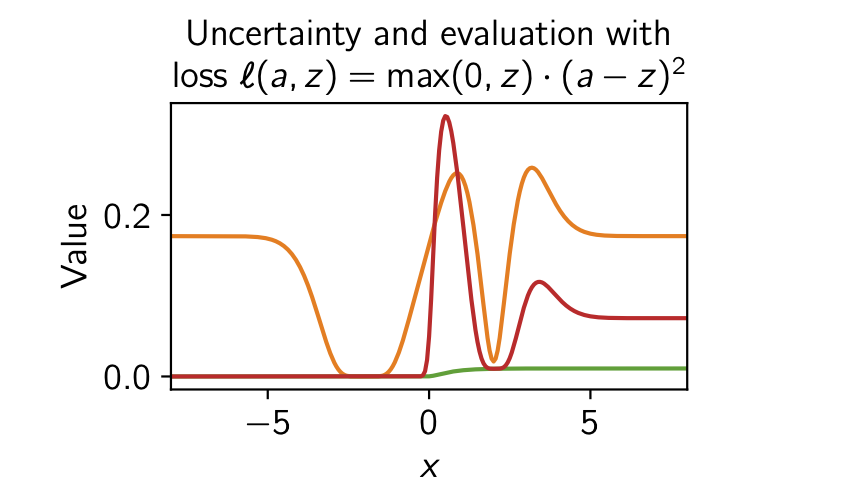

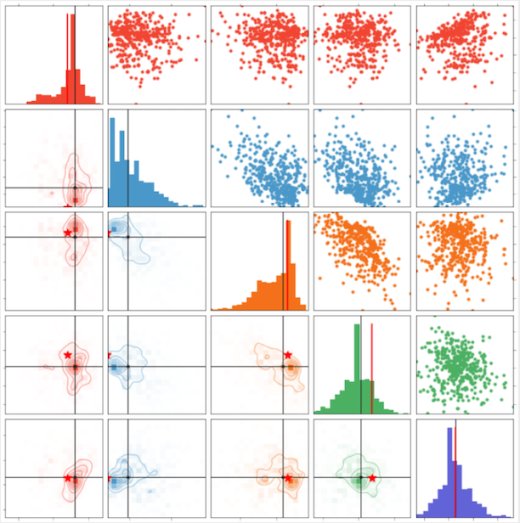

Rethinking aleatoric and epistemic uncertainty

This repo contains code for Rethinking aleatoric and epistemic uncertainty (ICML 2025).

Code · Freddie Bickford Smith

AI models collapse when trained on recursively generated data

This is the code repository for the paper “AI models collapse when trained on recursively generated data”.

Code · Ilia Shumailov, Zakhar Shumaylov, Yiren Zhao, Nicolas Papernot, Ross Anderson, Yarin Gal

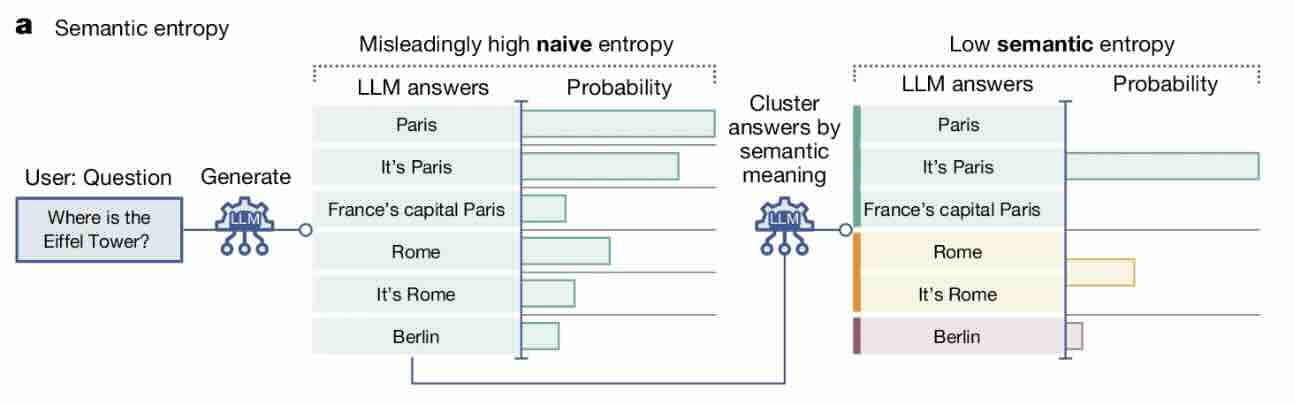

Detecting hallucinations in large language models using semantic entropy

This is the code repository for the paper “Detecting hallucinations in large language models using semantic entropy”.

Code · Sebastian Farquhar, Jannik Kossen, Lorenz Kuhn, Yarin Gal

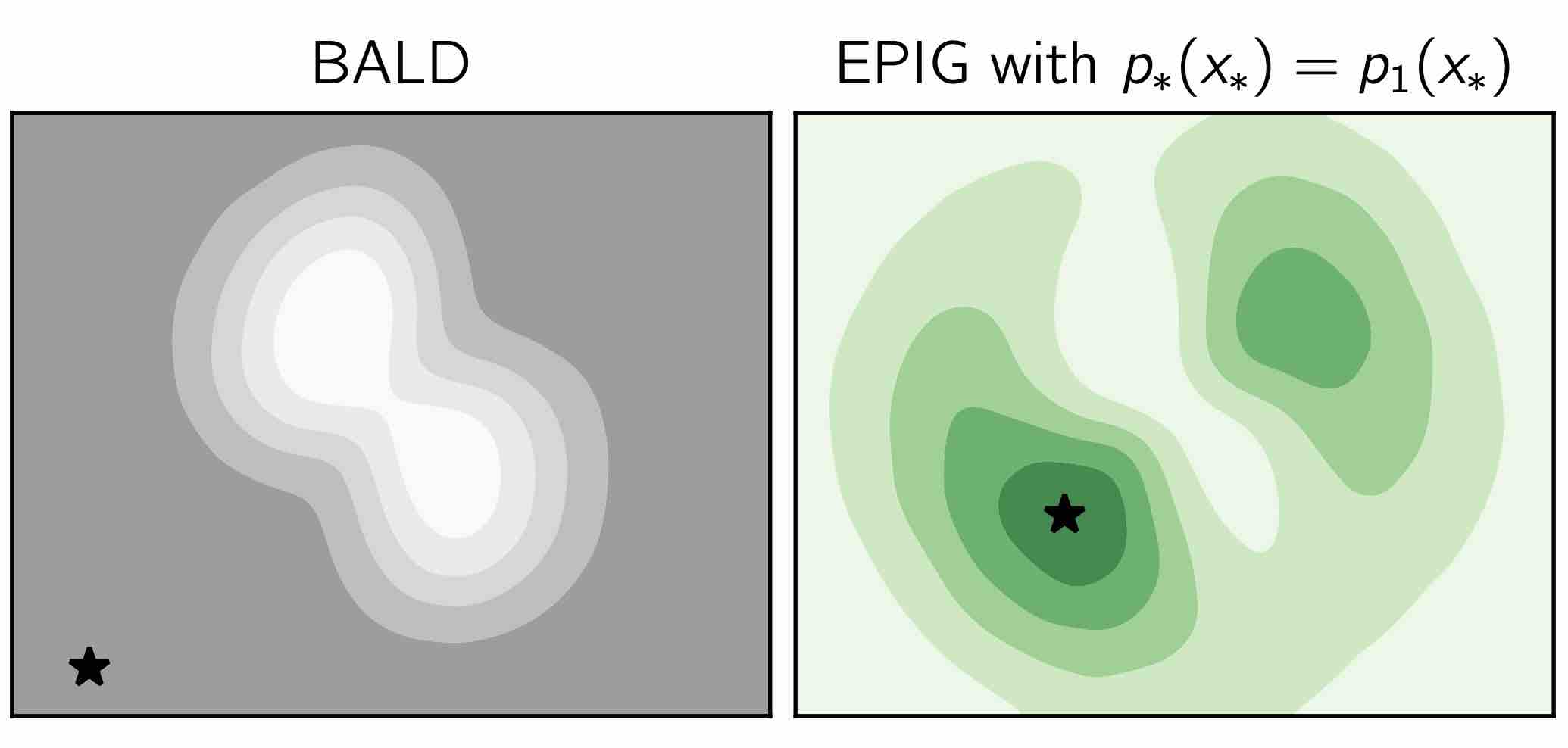

Bayesian active learning with EPIG data acquisition

This repo contains code for two papers:

- Prediction-oriented Bayesian active learning (AISTATS 2023)

- Making better use of unlabelled data in Bayesian active learning (AISTATS 2024)

Freddie Bickford Smith

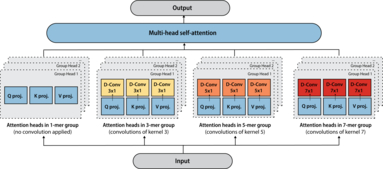

Tranception: protein fitness prediction with autoregressive transformers and inference-time retrieval

This is the official code repository for the paper “Tranception: protein fitness prediction with autoregressive transformers and inference-time retrieval”. The repository also includes the full ProteinGym benchmarks.

Code · Pascal Notin

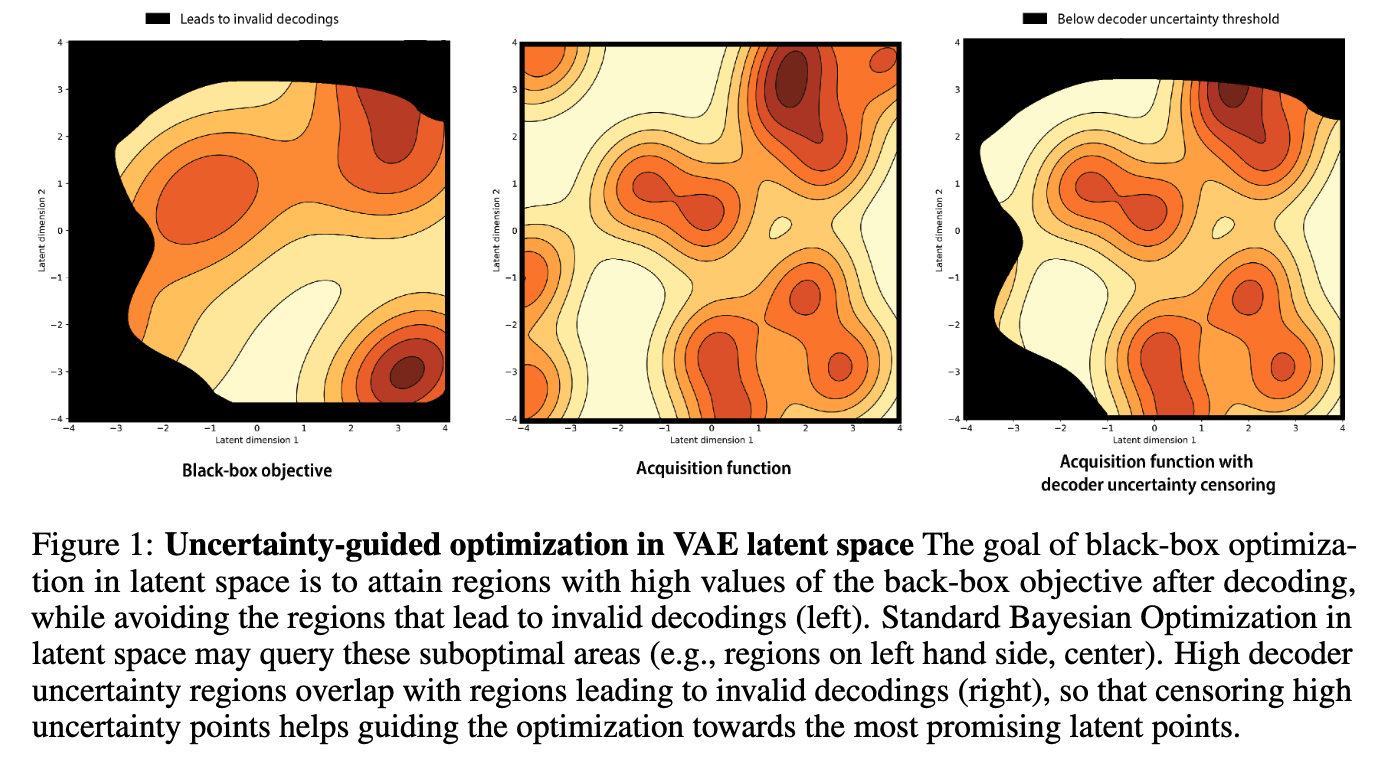

Improving black-box optimization in VAE latent space using decoder uncertainty

Official repository for our paper “Improving black-box optimization in VAE latent space using decoder uncertainty”. The codebase provides an example application in molecular optimization with JT-VAE for both Gradient Ascent and Bayesian Optimization.

Code · Pascal Notin

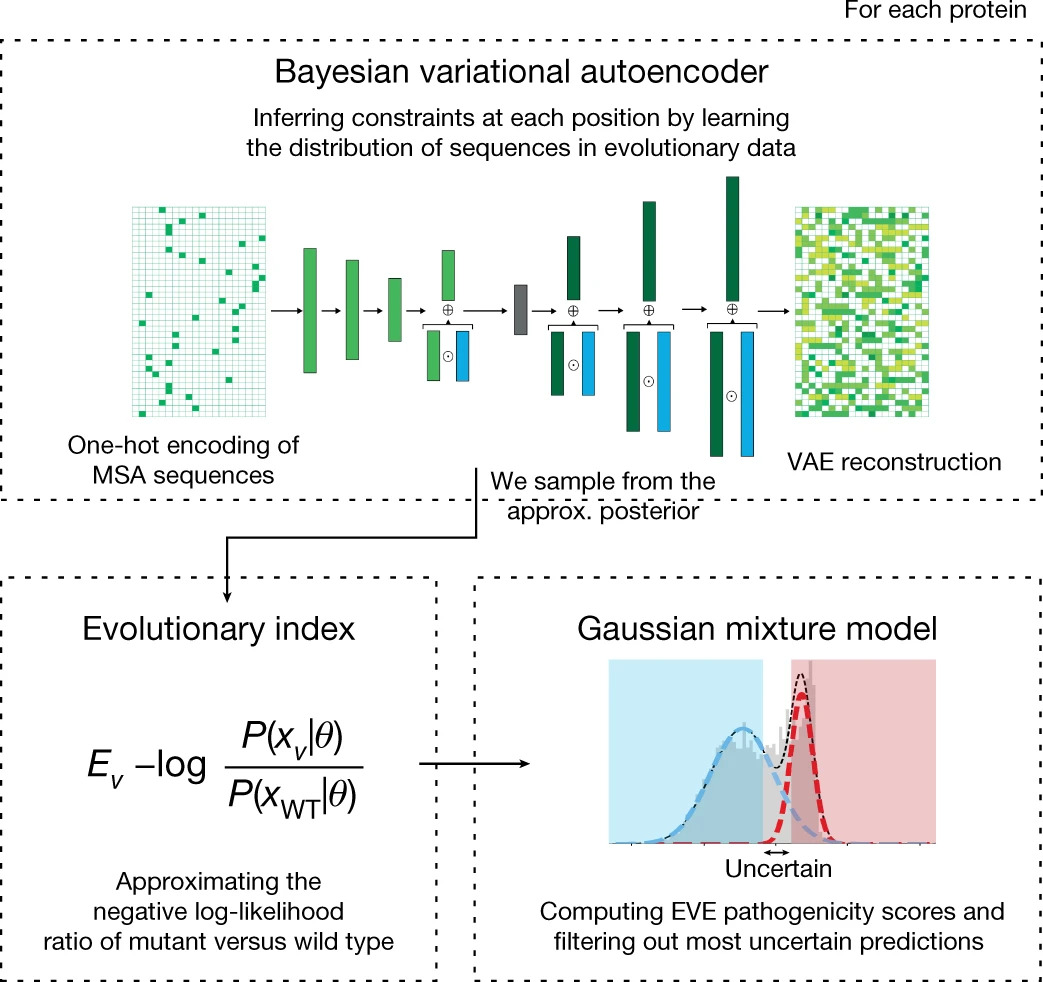

Disease variant prediction with deep generative models of evolutionary data

Official repository for our paper “Disease variant prediction with deep generative models of evolutionary data”. The code provides everything required to train the models used in EVE (Evolutionary model of Variant Effect) and to score any mutated protein sequence of interest.

Code · Pascal Notin

OATomobile: A research framework for autonomous driving

OATomobile is a library for autonomous driving research. It strives to expose simple, efficient, well-tuned and readable agents that serve both as reference implementations of popular algorithms and as strong baselines, while still providing enough flexibility for novel research.

Code · Angelos Filos, Panagiotis Tigas

Slurm for Machine Learning

Many labs have converged on using Slurm for managing their shared compute resources. It is fairly easy to get going with Slurm, but it quickly becomes unintuitive when you want to run a hyperparameter search. In this repo, Joost van Amersfoort provides some scripts that make starting many jobs painless and easy to control.

Code · Joost van Amersfoort
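To illustrate the kind of bookkeeping a hyperparameter search involves, here is a minimal sketch that expands a parameter grid into one `sbatch` command per configuration. It is not taken from the repository: the function name `build_sbatch_commands`, the training script `train.py`, and its `--lr` and `--seed` flags are all hypothetical. The commands are only built as strings, not submitted.

```python
import itertools
import shlex
from typing import Dict, List, Sequence


def build_sbatch_commands(script: str, grid: Dict[str, Sequence]) -> List[str]:
    """Return one sbatch command per combination of values in the grid."""
    names = sorted(grid)  # fixed order so the output is deterministic
    commands = []
    for values in itertools.product(*(grid[name] for name in names)):
        # Flags passed to the (hypothetical) training script.
        args = " ".join(f"--{name} {value}" for name, value in zip(names, values))
        # A readable job name makes jobs easier to find in the queue.
        job_name = "_".join(f"{name}{value}" for name, value in zip(names, values))
        wrapped = f"python {script} {args}"
        commands.append(
            f"sbatch --job-name={shlex.quote(job_name)} --wrap={shlex.quote(wrapped)}"
        )
    return commands


# Hypothetical grid: 2 learning rates x 3 seeds = 6 jobs.
commands = build_sbatch_commands("train.py", {"lr": [0.1, 0.01], "seed": [0, 1, 2]})
for command in commands:
    print(command)
```

Printing the commands before submitting them gives a cheap dry run; each string could then be passed to a shell on the cluster's login node.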

Torch Memory-adaptive Algorithms (TOMA)

A collection of helpers to make it easier to write code that adapts to the available (CUDA) memory. Specifically, it retries code that fails due to OOM (out-of-memory) conditions and lowers batch sizes automatically.

To avoid failing over repeatedly, a simple cache is implemented that memorizes the last successful batch size given the call and available free memory.

Code · Andreas Kirsch

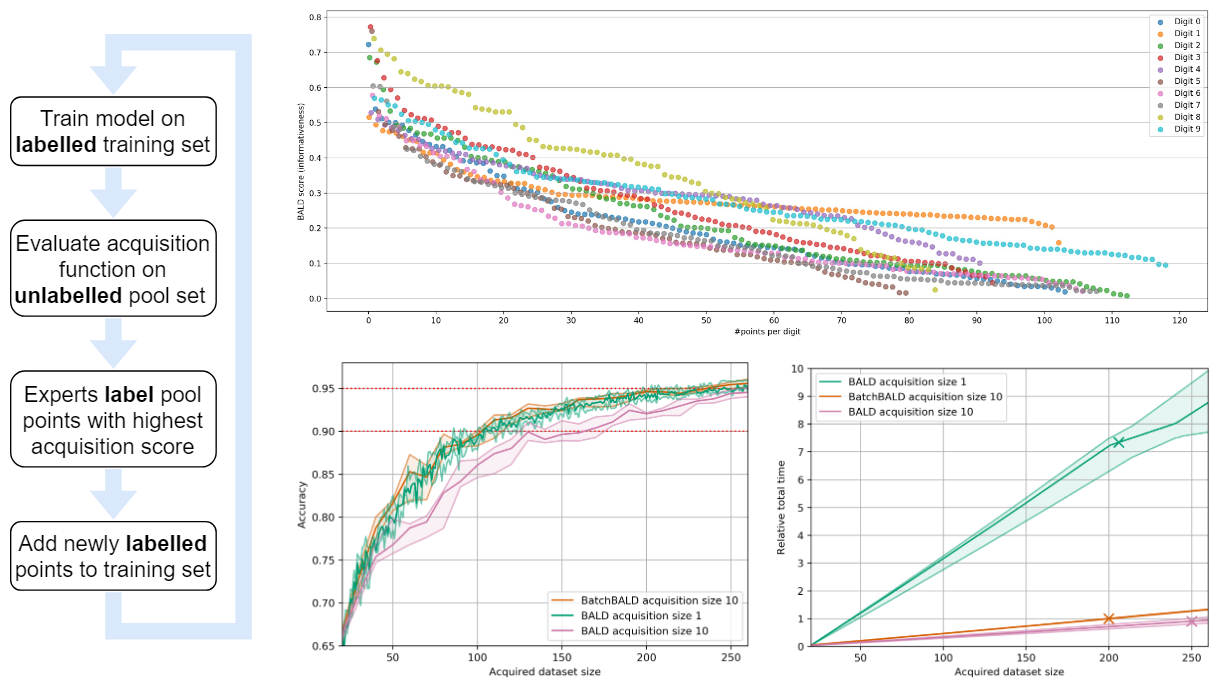

Reusable BatchBALD implementation (active learning)

Clean reimplementation of “BatchBALD: Efficient and Diverse Batch Acquisition for Deep Bayesian Active Learning”.

Code, Publication · Andreas Kirsch

Getting high accuracy on CIFAR-10 is not straightforward. This self-contained script gets to 94% accuracy with a minimal setup.

Joost van Amersfoort wrote a self-contained, 150-line script that trains a ResNet-18 to ~94% accuracy on CIFAR-10. It is useful for obtaining a strong baseline with minimal tricks.

Code · Joost van Amersfoort

Machine Learning Summer School (MLSS) Moscow: Bayesian Deep Learning 101

Slide decks from the Bayesian Deep Learning talks at the Machine Learning Summer School (MLSS) Moscow, with uncertainty demos and practical tutorials on sampling functions and active learning (practical credit: Ivan Nazarov).

Code · Yarin Gal

Putting TensorFlow back in PyTorch, back in TensorFlow (differentiable TensorFlow PyTorch adapters)

Do you have a codebase that uses TensorFlow and one that uses PyTorch and want to train a model that uses both end-to-end? This library makes it possible without having to rewrite either codebase! It allows you to wrap a TensorFlow graph to make it callable (and differentiable) through PyTorch, and vice-versa, using simple functions.

Code · Andreas Kirsch

Code for BatchBALD blog post and paper (active learning)

This is the code for the blog post Human in the Loop: Deep Learning without Wasteful Labelling and the paper BatchBALD: Efficient and Diverse Batch Acquisition for Deep Bayesian Active Learning.

Code, Publication · Andreas Kirsch, Joost van Amersfoort, Yarin Gal

Reproducing the results from "Do Deep Generative Models Know What They Don't Know?"

PyTorch implementation of Glow that reproduces the results from “Do Deep Generative Models Know What They Don’t Know?” (Nalisnick et al.). It includes a pretrained model, evaluation notebooks and training code!

Code · Joost van Amersfoort

Code for Bayesian Deep Learning Benchmarks

To make a real-world difference with Bayesian Deep Learning (BDL) tools, the tools must scale to real-world settings. For that, we, the research community, must be able to evaluate our inference tools (and iterate quickly) on real-world benchmark tasks. We should be able to do this without necessarily worrying about application-specific domain knowledge, such as the expertise often required in medical applications. We require benchmarks that test inference robustness, performance, and accuracy, in addition to the cost and effort of development. These benchmarks should span a variety of scales, from toy MNIST-scale benchmarks for fast development cycles to large-data benchmarks that are faithful to real-world applications and capture their constraints.

Code · Angelos Filos, Sebastian Farquhar, Aidan Gomez, Tim G. J. Rudner, Zac Kenton, Lewis Smith, Milad Alizadeh, Yarin Gal

Code for "An Ensemble of Bayesian Neural Networks for Exoplanetary Atmospheric Retrieval"

Recent work demonstrated the potential of using machine learning algorithms for atmospheric retrieval by implementing a random forest to perform retrievals in seconds that are consistent with the traditional, computationally expensive nested-sampling retrieval method. We expand upon their approach by presenting a new machine learning model, plan-net, based on an ensemble of Bayesian neural networks that yields more accurate inferences than the random forest for the same data set of synthetic transmission spectra.

Code, Publication · Adam D. Cobb, Michael D. Himes, Frank Soboczenski, Simone Zorzan, Molly D. O'Beirne, Atılım Güneş Baydin, Yarin Gal, Shawn D. Domagal-Goldman, Giada N. Arney, Daniel Angerhausen

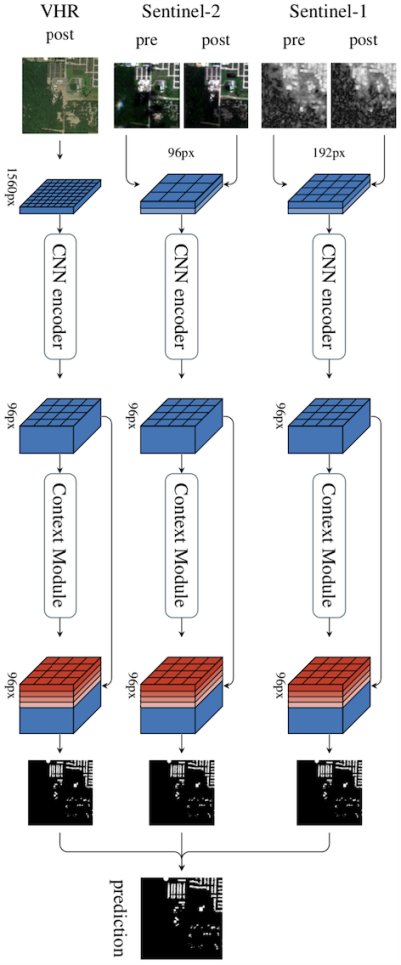

Code for Multi³Net (multitemporal satellite imagery segmentation)

We propose a novel approach for rapid segmentation of flooded buildings by fusing multiresolution, multisensor, and multitemporal satellite imagery in a convolutional neural network. Our model significantly expedites the generation of satellite imagery-based flood maps, crucial for first responders and local authorities in the early stages of flood events. By incorporating multitemporal satellite imagery, our model allows for rapid and accurate post-disaster damage assessment and can be used by governments to better coordinate medium- and long-term financial assistance programs for affected areas. The network consists of multiple streams of encoder-decoder architectures that extract spatiotemporal information from medium-resolution images and spatial information from high-resolution images before fusing the resulting representations into a single medium-resolution segmentation map of flooded buildings. We compare our model to state-of-the-art methods for building footprint segmentation as well as to alternative fusion approaches for the segmentation of flooded buildings and find that our model performs best on both tasks. We also demonstrate that our model produces highly accurate segmentation maps of flooded buildings using only publicly available medium-resolution data instead of significantly more detailed but sparsely available very high-resolution data. We release the first open-source dataset of fully preprocessed and labeled multiresolution, multispectral, and multitemporal satellite images of disaster sites along with our source code.

Code, Publication · Tim G. J. Rudner, Marc Rußwurm, Jakub Fil, Ramona Pelich, Benjamin Bischke, Veronika Kopackova, Piotr Bilinski

FDL2017: Lunar Water and Volatiles

This repository represents the work of the Frontier Development Labs 2017: Lunar Water and Volatiles team.

NASA’s LCROSS mission indicated that water is present in the permanently shadowed regions of the Lunar poles. Water is a key resource for human spaceflight, not only for astronaut life support but also as an ingredient for rocket fuels.

It is important that the presence of Lunar water is further quantified through rover missions such as NASA’s Resource Prospector (RP). RP will be required to traverse the lunar polar regions and evaluate water distribution by periodically drilling to suitable depths to retrieve samples for analysis.

To maximise the value of RP and of future missions, it is important to construct robust and effective plans. This year’s LWAV team began by replicating traverse planning algorithms currently in use by NASA JPL. However, while starting an automated search for maximum-length traverses, the team noticed an opportunity.

Current maps of the Lunar surface are largely composed of optical images captured by the Lunar Reconnaissance Orbiter (LRO) mission. For our study we were mainly interested in optical images from the LRO Narrow Angle Camera (NAC) and elevation measurements from the Lunar Orbiter Laser Altimeter Digital Elevation Model (LOLA DEM)…

Code · Dietmar Backes, Timothy Seabrook, Eleni Bohacek, Anthony Dobrovolskis, Casey Handmer, Yarin Gal