
On Feature Collapse and Deep Kernel Learning for Single Forward Pass Uncertainty

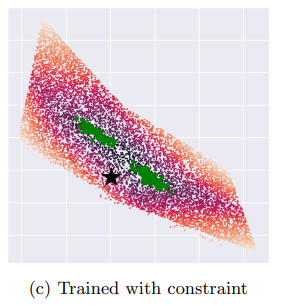

Inducing point Gaussian process approximations are often considered a gold standard in uncertainty estimation since they retain many of the properties of the exact GP and scale to large datasets. A major drawback is that they have difficulty scaling to high-dimensional inputs. Deep Kernel Learning (DKL) promises a solution: a deep feature extractor transforms the inputs over which an inducing point Gaussian process is defined. However, DKL has been shown to provide unreliable uncertainty estimates in practice. We study why, and show that with no constraints, the DKL objective pushes “far-away” data points to be mapped to the same features as those of training-set points. With this insight we propose to constrain DKL’s feature extractor to approximately preserve distances through a bi-Lipschitz constraint, resulting in a feature space favorable to DKL. We obtain a model, DUE, which demonstrates uncertainty quality outperforming previous DKL and other single forward pass uncertainty methods, while maintaining the speed and accuracy of standard neural networks.
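
For reference, the bi-Lipschitz constraint mentioned in the abstract can be written as below. This is the standard textbook definition, not a formula quoted from the paper; the symbols $f$ (the feature extractor), $x_1, x_2$ (any two inputs), and the constants $L_1, L_2$ are notation assumed here for illustration.

$$
L_1 \,\lVert x_1 - x_2 \rVert \;\le\; \lVert f(x_1) - f(x_2) \rVert \;\le\; L_2 \,\lVert x_1 - x_2 \rVert
\qquad \text{for all } x_1, x_2, \quad 0 < L_1 \le L_2 .
$$

The lower bound is what rules out the collapse the abstract describes: two inputs that are far apart cannot be mapped to the same features. The upper bound keeps the mapping smooth, so nearby inputs stay nearby in feature space.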

Joost van Amersfoort, Lewis Smith, Andrew Jesson, Oscar Key, Yarin Gal

arXiv (2022)
